Every now and then, a company faces a moment of reckoning. There is a fork in the road and the choices are not clear. One path continues on the road you’re on. Things are fine… but not great. Still, it’s a safe path. No one gets fired for continuing with what’s worked in the past. Even if it’s not working that well now. Margins are eroding, competition is heating up. Maybe it’s not exactly safe, but it’s known.

And then there’s another road. Rocky, steep, mostly untrodden. It’s not clear where it goes, but… it’s gotta be better than the road you’re on, right? Right?

When you’re in a startup and things stall, it’s worth examining your strategy in the cold light of day. Every startup is hard, so be careful of jumping paths to chase some shiny new thing. Don’t mistake temporary setbacks for fundamental blockers. Losing a customer or a key employee is a problem, but it’s not insurmountable. Sometimes, the best option is to continue to grind away.

But if the current path isn’t going to get you the growth you need to sustain the business long term, you should carefully examine new options. This is especially true when there is a fundamental shift in the computing landscape.

Tsunami of Change

Major changes in computing happen about every ten years and their impact is massive. Whether it was the shift to microcomputers, graphical user interfaces, client-server, the web, cloud computing or mobile computing, each of these changes was like a tidal wave on the industry. There was a before and an after and companies either embraced the change or got washed away. Incumbents were disrupted as new opportunities emerged for more agile competitors.

I suspect AI will have a similar impact, reshaping the industry. If you’re a software company and you don’t have an AI strategy, it’s like not having a web strategy in 1999.

That said, not every growth trend breaks into the mainstream. Network computing, internet of things, blockchain, Bitcoin, NFTs, 3D printing and virtual reality were hugely hyped and are now niche markets at best. Why? Because they solved problems that few people had.

When you’re considering a change in strategy, whether based on new technology or competitive dynamics, how do you tell whether it’s worth the risk? There’s no surefire way, but when in doubt, I recommend validating with prospects and customers.

• Are you seeing evidence of the opportunity among your existing customers?

• Are the benefits to new customers compelling enough to get them to switch?

• Do you have a realistic assessment of the competition?

• What gives you a competitive advantage in this new opportunity?

• Do you understand the required changes in sales and marketing?

Also consider how to tackle the opportunity. Is this an either / or situation? Do you have enough resources to tackle the new opportunity while continuing to operate your existing business? Does it require adding new people to the organization? Can you afford it?

Bring Your A-Game

If it’s a truly strategic initiative that can improve the fate of the company, you must make it a top priority. That means putting your best people on it. Creating something new will probably take longer than you expect, so the most important thing is to start.

When you’re building a new product, you want a tight, focused, experienced team with the necessary skills to bring the product to market. Ideally, you should have a strong product manager from the beginning so you focus the team on the most critical elements required for success.

The best results I have seen start with a small, focused team that is used to working under pressure. Let the team gain momentum before you add to it. Too many people at the onset can lead to a lot of needless debate and discussion. A small, nimble team is likely to move faster than a large one. Be sure you protect the team from bureaucratic forces.

Windows Changed Everything

I remember facing a “burn the boats” moment early in my career at Borland. I’d been working as a product manager on Turbo Pascal for a couple of years. It was a good product, originally created by Anders Hejlsberg, one of the ten best programmers in the world. Turbo Pascal was Borland’s first breakout product and it had generated hundreds of millions in revenues over ten years. However, tastes in programming languages change, and C++ had become the hot new thing. That was fine for Borland as we also had a great C++ compiler.

More daunting was the fact that Windows, after languishing for several years, was finally gaining traction. Unfortunately, programming Windows was far more complicated than traditional text-based applications on MS-DOS. It took over 100 lines of C code to create “Hello, World.” Ugh. Turbo Pascal for Windows, which included an object-oriented library, cut it down to 25 lines, but it was still too complex.

There was a risk that an entire generation of MS-DOS programmers (myself included) would be left behind. Meanwhile, Microsoft had released Visual Basic for Windows. While it had limitations (no data access, slow performance, limited extensibility), it made Windows programming accessible to mortals.

At the same time, corporate customers were moving away from mainframes toward client/server computing, using Windows for the front-end interface. The back-end data and business logic were stored in relational database servers such as Oracle, IBM DB2 and Microsoft SQL Server.

The Birth of Delphi

The executive team at Borland was trying to figure out how to address the opportunity around Windows and client/server. The options were not great. C++ was too complicated. Database tools like Borland Paradox and dBase were too limited.

We knew we were up against it. Jobs were at stake. We felt pressure to deliver something significant. As has often been said, “Nothing concentrates the mind like the prospect of being hanged at dawn.”

So I rallied Anders and engineering manager Gary Whizin and convinced them that we needed to step up. We didn’t know anything about client/server computing, but then, neither did anyone else. But we knew it was important.

For our business to thrive, we needed to tackle the challenge of making client/server and GUI programming dramatically easier. New products, the so-called fourth generation languages (4GLs) like SQLWindows and PowerBuilder, suffered from limitations in performance and extensibility. Like Visual Basic, they were built on slow interpreters. We knew we could deliver a 10x performance gain by using native code compilers that had been our hallmark at Borland.

So Gary, Anders and I started a skunkworks project within Borland to create what would become Delphi. Philippe Kahn, the founder and CEO of the company, referred to the project by the not-so-subtle codename VBK (Visual Basic Killer). But we saw Delphi as much more than that. We were on a mission to make client/server easy for developers.

I was the product manager, and since I had nothing to show in the early days, I went out and spoke to customers about what they were trying to do. What I found over and over again was that the 4GL tools made some things easier, but users eventually hit a wall. If your 4GL didn’t have a built-in object for what you needed, you couldn’t create one. You were limited to the components that were hard-coded into the system.

The life of any 1.0 software project (or any startup) is rarely smooth sailing. Instead, it’s a series of battles, inspiring wins and devastating blows.

About a year into development, we hit a major setback. A developer had left the company and when Anders and Chuck Jazdzewski reviewed the database components he’d been working on, they found them far too convoluted. It was going to take months to rewrite them.

At that point, just about every person on the project came into my office and asked if we couldn’t just ship the desktop product, without the fancy client/server features.

Whenever I got that question, I drew a graph that showed how our desktop sales would initially be larger than client/server, but client/server revenues would grow at a faster rate and ultimately eclipse desktop sales. Then they would leave my office. Maybe they believed me, maybe they didn’t. But they understood the mission. We were building a product that would define the future of the company and that meant client/server. There was no turning back.

Of course, when they left my office, I’d be staring at the same graph, wondering. The graph represented our strategy but who could predict the outcome?

As the project gathered steam, we were able to add more people, but it remained a small, fierce team. It took a lot of 60-70 hour weeks across the entire team. We weren’t doing it because we had to, we were doing it because we wanted to. We weren’t so much managed as let loose. Gary was perhaps the most intense engineering manager I ever worked with. We knew Delphi had to be great and none of us would settle for less.

We launched Delphi on February 14, 1995, at the Software Development conference at Moscone Center, along with nearly a thousand geeks who couldn’t get dates. The next day, the Borland booth was mobbed. We knew it was a good product, but even we were surprised at the response.

First-year revenues for Delphi were around $70m ($140m adjusted for inflation) and client/server revenues eclipsed the desktop revenues in the second year. Borland returned to profitability.

Delphi 1.0 compiled code at about 34,000 lines per minute. Twelve months later, we released Delphi 2.0, the 32-bit version for Windows 95 and NT, which was twice as fast. That pace of innovation continued for many years and even though Borland is long gone, there’s still a vibrant Delphi community. I remain grateful for what was an intense, challenging and extremely fun time.

And we really did save the company.

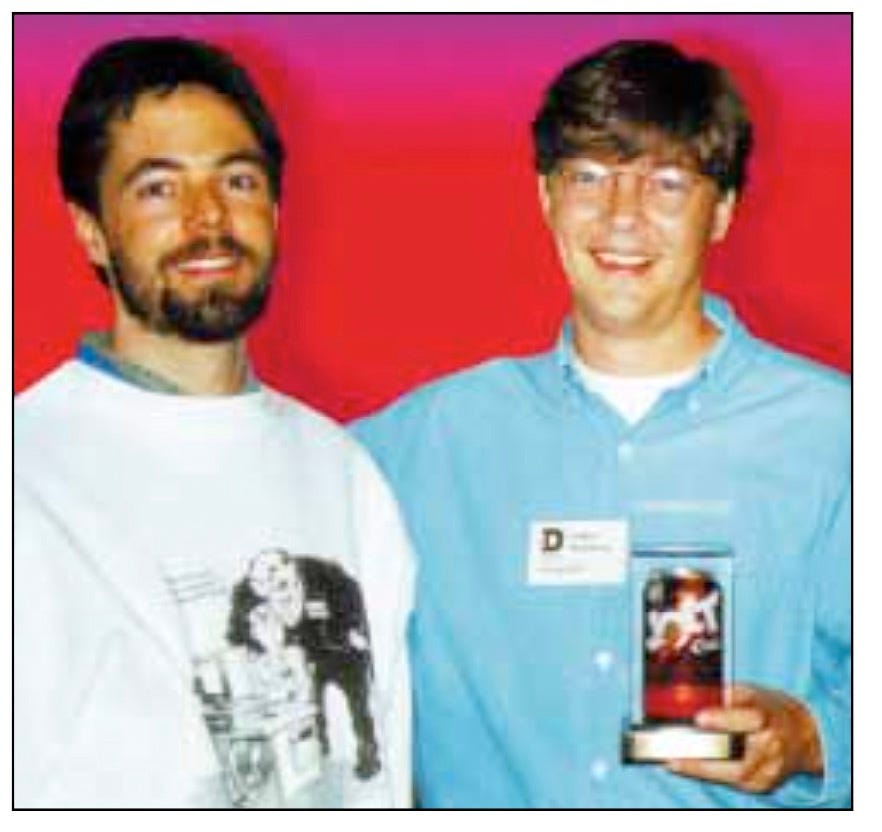

Here’s a photo of Anders and me from November 1995, when Delphi won the prestigious Software Development Jolt Cola Award, beating C++, Java and Visual Basic. I still have that trophy on my shelf. Later on, Anders joined Microsoft as a principal engineer, where he was responsible for C#, .NET and TypeScript. Not too bad!